Yesterday I covered the implementation details of DateTime and SmallDateTime datatypes in SQL Server 2005. I approached the issue of testing dates to see if they fell on a particular date… but then stopped-short of some fairly useful (but arcane) stuff about rounding dates.

Yesterday I covered the implementation details of DateTime and SmallDateTime datatypes in SQL Server 2005. I approached the issue of testing dates to see if they fell on a particular date… but then stopped-short of some fairly useful (but arcane) stuff about rounding dates.

So let’s imagine a scenario. We take orders and store the date-and-time at which they are received… but later on we want to report on ‘Orders that occurred yesterday’… or today. It’s not uncommon. How do we get ‘today’ from a function like getdate() or CURRENT_TIMESTAMP?

I’m going to start by summarising some options presented elsewhere, and then we’ll look at my alternative method of rounding.

Two Common Rounding Options

Run the following SQL:

SELECT CONVERT(CHAR(8), GETDATE(), 112) SELECT DATEADD(DAY, 0, DATEDIFF(DAY, 0, GETDATE()))

…and you should get results something like this:

20090830 NB: This has been converted to a string 2009-08-30 00:00:00.000 ...where this result is still a DateTime internally

So, in each case, the time portion of the date has been removed:

- The first option literally converts the date to a string representation (happily one that the system will be prepared to convert back to a DateTime);

- The second option finds the whole number of days between getdate() and 0 (0 as you may recall being 1/1/1900 for a DateTime), then adds that number back to 0. As the DATEDIFF function gives us WHOLE days, it indirectly does the rounding for us!

It might be interesting to note at this point that Sql Server has allowed us to use an integer, 0, as a DateTime! And yes, it turns out on the CAST and CONVERT Books Online page that there is indeed an implicit conversion between the two.

An Alternative Rounding Option

Here’s my approach to rounding DateTimes. I suspect I know about this from my days using Oracle, but I am not certain where it originates from; though historically my impression on how DateTimes were stored was that they were really FLOATs. This impression was incorrect for Sql Server, but perhaps was correct for another DB.

SELECT CAST(FLOOR(CAST(GETDATE() AS FLOAT)) AS DATETIME)

-- Results in the following:

2009-08-30 00:00:00.000

How this works is as follows: Firstly we convert our DateTime to a FLOAT. Conceptually, this is a whole number of days since (or before) 1/1/1900, with the fractional part of the time since midnight added to that. We FLOOR that value to take it to the nearest smaller integer. Some comparisons may allow us to use that ‘day number’ value, but generally it is best to CAST it back to a DateTime**. You may also like to refer to my last post where the bottom section has a graphical representation of the conversion of DateTimes to floats.

Speed Comparisons in a Table-Scan Situation

In this section I am very much beholden to the work already done by Tibor Karaszi on his blog page focussing on DateTime. Performance comparisons here are based on his SQL at info_datetime_rid_sec_perf_v2.asp. I have updated that SQL to provide a comparison with my method: SqlServerDatTimeConversions_1.sql.

What this code does is create a 10,000,000 row table in tempdb with DateTimes in each row. Then, SQL is run to scan the table, grouping the results by date (there is just one date, today’s) and counting the number of rows. The different methods are outlined in the following SQL fragment:

SELECT CONVERT(CHAR(8), GETDATE(), 112) -- character string conversion SELECT DATEADD(DAY, 0, DATEDIFF(DAY, '', GETDATE())) -- reference to 0/'' base date SELECT DATEADD(DAY, DATEDIFF(DAY, '20040101', GETDATE()), '20040101') -- reference to '20040101' SELECT DATEADD(DAY, 0, DATEDIFF(DAY, 0, GETDATE())) -- reference to 0

Results on my machine are as follows, produced from averaging the times from 5 separate runs:

| Conversion Method | Time (ms) [Avg of 5 runs] | Elapsed Time Performance (compared to baseline string conversion method) |

|---|---|---|

| Baseline String Conversion | 8464 | x1 |

| DATEADD Alternative 1 | 3653 | x2.31 |

| DATEADD Alternative 2 | 3217 | x2.63 |

| DATEADD Alternative 3 | 3748 | x2.25 |

| Cast / Floor / Cast Method | 3598 | x2.35 |

So, all the methods hover around 2.5x faster than the baseline CONVERT(CHAR(8), x, 112) method, 2nd DATEADD method is fastest consistently (it looks like this: DATEADD(DAY, DATEDIFF(DAY, '20040101', date_time), '20040101'), where ‘20040101’ is just a consistent ‘base’ date to compare against).

A couple of side-notes – if we remove the OPTION (MAXDOP 1) from the SQL, the elapsed times on my quad-core cpu, but the total CPU used remains about the same. Also an note here is that Sql Server Execution Plan estimates the cost for all 5 attempts as being exactly the same, rating each as having taken 20% of the ‘batch cost’!

An Alternative Test Suite

The tests above are a great way to test the relative performance of the conversion methods, but I felt they emphasised poor data comparison technique for certain types of query, something that Tibor does mention on his DateTime page. I wanted to create an alternative table that would – at least to a small extent – emphasize the difference between index and table, and enable me to compare performance of ‘bad’ comparisons with good ones in the query plan.

The alternative test SQL file SqlServerDateTimeConversions_2.sql creates a temporary table with 10M rows, approximately one per second for the last 50 and next 65 days. This means we now have an equivalent to our notional order table, so we might want to ask the question ‘How many orders did we have yesterday?’

Index Seeks versus Index Scans

For my first test, I wanted to compare what I consider a bad date comparison technique with a good one… while actually using the ‘worst’ technique to convert dates.

-- 'Bad' comparison technique - conversion of column value forces full scan CONVERT(CHAR(8), date_time, 112) = CONVERT(CHAR(8), GETDATE() - 1, 112) -- GETDATE() - 1 is Yesterday -- 'Better' comparison technique. We change our test to leave the column value unchanged WHERE date_time >= CONVERT(CHAR(8), GETDATE() - 1, 112) -- GETDATE() - 1 is Yesterday AND date_time < CONVERT(CHAR(8), GETDATE(), 112)

In tests on my machine, the latter code ran about 46 times faster! It used about 4000ms less CPU, and 23000 fewer ‘logical reads’ than the first comparison. This is because the second method did not alter the value found in the table column at all. By providing <= and < tests against that column value, we could ‘seek’ into the index very quickly to find the relevant start of the range, and then scan through the index from there. All we had to do was count the matching rows in the index. Sql Server probably did just two conversions of the DateTime that you can see in the SQL.

In contrast, because the former sql converted the column value, although the index was used, the whole index had to be scanned, and for each and every row the conversion had to be done, and then the test could be run. This means that not only did the slower query have to read much more data (about 100x more), it had to do around 10,000,002 conversions (one for each row of data, and two as they appeared in the SQL).

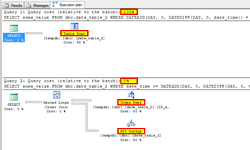

Looking at the execution plan for the two queries, we can see that the difference is subtle. Certainly, the first query is rated at taking 99% of the work of the batch of two queries, but the real difference is the ‘Index Scan’ vs. the ‘Index Seek’, which I’ve highlighted in the screenshot.

Index Seeks with RID Lookup versus Table Scans

For the second test, I use one of the DATEADD date conversion methods, again contrasting a date-comparison approach where the column value is converted, vs. one where it is not. This time, however, we are selecting the ‘some_value’ character column from the table, which is not covered by our single index.

Once again, the ‘bad’ comparison method is reported as being substantially worse than the better one; though this time with less dramatic differences than when the index ‘covered’ our result set. This is because, while the index could be ‘seeked’ into to quickly find references to the original record, we then had to do a ‘RID Lookup’ which takes an additional read to get the actual data. For me, the second piece of SQL ran about 3x faster… though the Execution Plan actually showed a more substantial difference! We’re probably in the realm now that one piece of SQL running affects the other one (e.g. by loading pages into cache), however, the optimiser should guess its way through when to use the index seek + RID Lookup approach, or a full table-scan. The Execution Plan screenshot is shown to the right.

Summary

I hope that I have left you with a strong impression that converting a column value to match a test value is a Bad Thing, and this is especially true with DateTime values. Although the CAST/FLOOR/CAST method I have tended to use is not the fastest, it is reasonable and a useful tool for the toolbox.

If you want to know more about table and index organisation, I would strongly recommend ‘Inside Microsoft Sql Server 2005: T-SQL Querying’ by Itzik Ben-Gan, and another book in that series already had a mention.

Notes

* If this seems complex, and I guess it is to some extent, then we could think of alternative ways to get that ‘day number’ even more quickly (though it is possible that Sql Server is optimising some of this for us) – e.g. perhaps we could strip out the first four bytes and just use them directly?